Time Value of Data: The Summit of Now and the Peak of Soon After

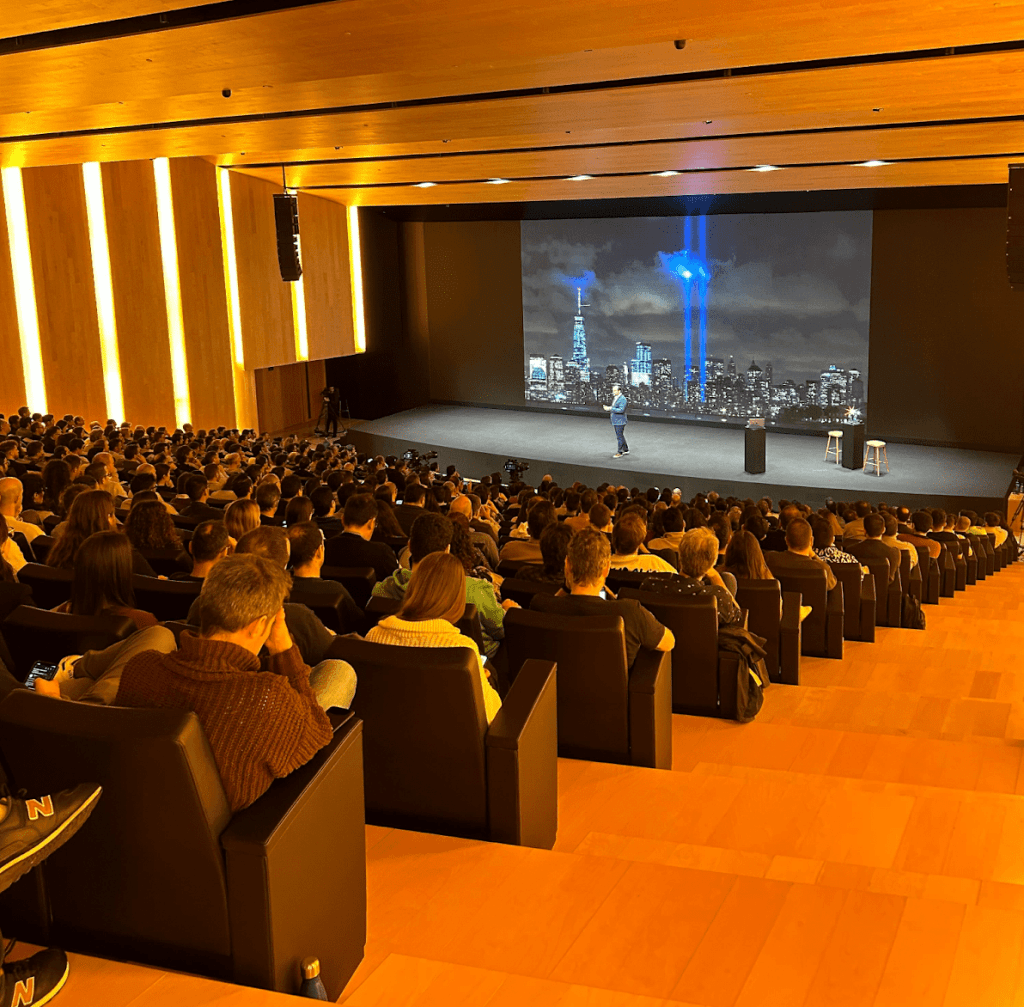

Last week I had the chance to visit a major global fashion retailer and give an industry talk on Real-time AI. This company was hosting Tech Days and invited a few of their vendors, including Snowflake, to give a talk to thousands of their tech employees joining in-person and online.

To get to their HQ I had to travel to a small town on the Galician coast of Spain. If you know, you know. The closer I got to the HQ, the more well-dressed folks I saw.

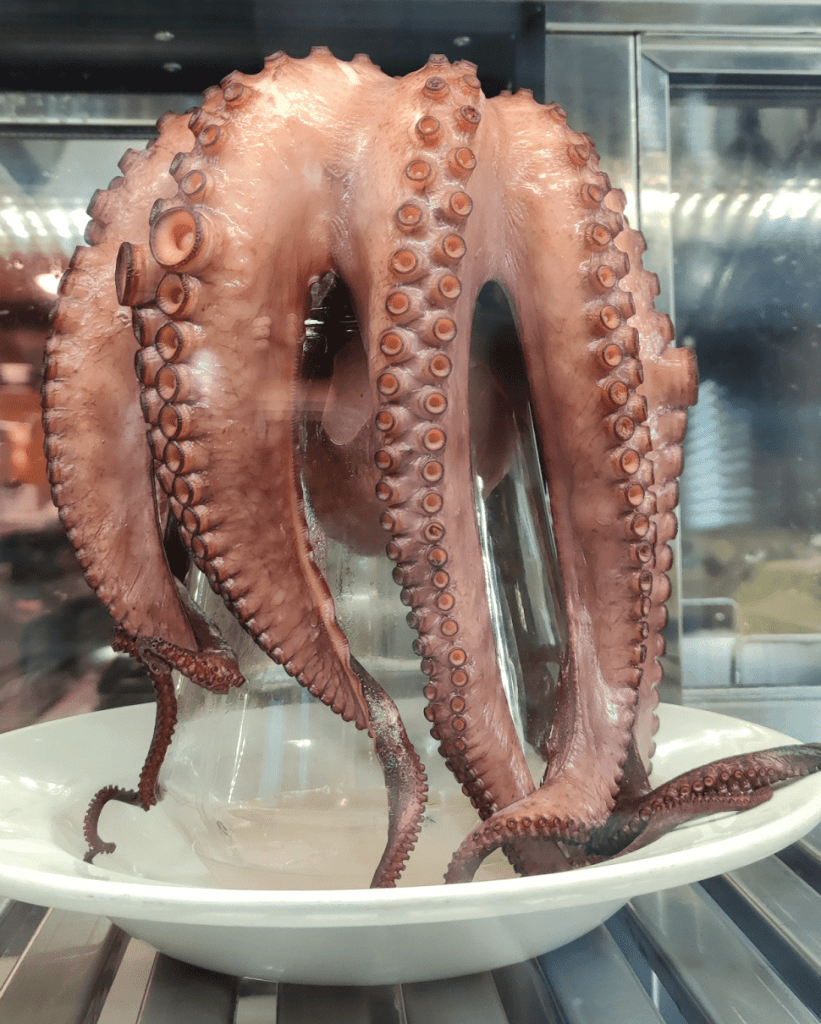

The view from my hotel room was pretty impressive. They say one must try the octopus when visiting.

A nice gesture from the event organizers was a tour of an exhibition of work by Steven Meisel, who has worked a lot with this fashion retailer.

The campus, located in a small Galician village where the founder of the company is from, looked as if Apple had gone on vacation to Sweden and come back wanting to build a summer residence.

But now back to the talk.

A few years back I began thinking about a topic that was just emerging at the time.

I started seeing more and more companies wanting to do more with the billions of events they collected and stored. They wanted to react to them in real-time or near real-time. And they wanted to react with almost human intelligence, instead of just counting or aggregating or storing and forgetting.

This led me to naming the three driving forces in the market at the time.

Very large number of events: A global user base creates billions upon billions of interactions with systems.

Real-time: Systems must serve intelligence in or near real-time, or opportunities are lost.

Near-human intelligence: Systems must be able to apply near-human intelligence to super-human volumes of data.

Why are businesses interested in reacting in real-time? This is best visualized by this picture.

Time Value of Data

Imagine this mountain range of business value. The rightmost peak is the Mountain of Wisdom. These are our traditional Data Lakes or Data Warehouses, which hold events collected over a long period of time. You can run analytical queries on them. You can train your ML models on them. You have years and years of data there. Businesses get a lot of value from that.

But in addition to that mountain of data there is also a lot of value if you can react to an event in the same instant it happens. The traditional example for that is fraud detection and cancellation of a transaction. This is the Summit of Now.

Reacting to an event instantaneously is not always possible, but that’s ok, because there are lots of use cases where you have between several seconds and several minutes to do something smart. This is the Peak of Soon After.

And this brings us to the definition of Real-time AI, and the techniques that implement it.

Near-human Intelligence

Let’s start with the easy part. It is clear that to address the need for near-human intelligence one needs to use Machine Learning. Dealing with billions of events manually, with heuristics, just would not scale.

Real-time

On the time dimension we have two different approaches.

True Real-time: When we need true Real-time, meaning intelligent responses have to happen in a second or less, Large-Scale Event Processing is best. Building pipelines is the wrong approach here, because pipelines tend to micro-batch (yes, everyone is doing it, they just call it something else) and that introduces latency. I happen to live in a country where Amazon Store cards are a bit stingy with credit. My limit is low by American standards, and Amazon periodically cancels my orders when I exceed my store card limit, but they do it 1, 3, or 5 minutes after they accept my order. Why they can’t check before accepting the order beats me. I sometimes imagine a Kinesis Data Firehose piping all these orders into some file, and a cron job periodically waking up and making a micro-batch call to their Store Card bank to check the balance of all their recent customers. Not great. For the best customer experience you want to be able to do inference on very small batches (if you batch at all), in the Cloud or even on the Edge if you are in a brick-and-mortar retail or IoT situation.
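To make the store card story concrete, here is a minimal sketch of the two designs. Everything in it is hypothetical: the `Order` type, the in-memory credit table, and both function names are made up for illustration, and a simple limit check stands in for real inference. It says nothing about how Amazon or any bank actually works.

```python
from dataclasses import dataclass


@dataclass
class Order:
    order_id: str
    customer: str
    amount: float


def accept_per_event(order, credit):
    """Per-event path: check the limit at the moment the order arrives."""
    if order.amount > credit.get(order.customer, 0.0):
        return False  # rejected before the customer ever sees "order accepted"
    credit[order.customer] -= order.amount
    return True


def accept_then_micro_batch(orders, credit):
    """Micro-batch path: accept everything, check later in one scheduled job.

    Returns the ids of orders that get cancelled after the fact.
    """
    accepted = list(orders)  # every order is accepted up front
    cancelled = []
    for order in accepted:  # imagine this loop running minutes later
        if order.amount > credit.get(order.customer, 0.0):
            cancelled.append(order.order_id)
        else:
            credit[order.customer] -= order.amount
    return cancelled


if __name__ == "__main__":
    orders = [
        Order("o1", "alice", 60.0),
        Order("o2", "bob", 50.0),
        Order("o3", "alice", 60.0),
    ]
    # Hypothetical remaining credit per customer.
    print([accept_per_event(o, {"alice": 100.0, "bob": 20.0}) for o in orders])
    print(accept_then_micro_batch(orders, {"alice": 100.0, "bob": 20.0}))
```

Both paths reach the same decisions. The difference is when the customer finds out: at checkout in the first case, minutes after a confirmation in the second.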

Near Real-time: However, in a large class of use cases users have between several seconds and several minutes to act. For example, I know at least one Security Alarm vendor that does not react to every signal from a motion or glass-break sensor, or to a video camera reporting movement. They wait multiple seconds, collecting all the signals from various rooms of the house, and then make an inference about whether the incident is a real case of breaking and entering or a false positive. There is a real cost to their business if they react to every false positive, and driving down the rate of false alarms keeps their costs in line. In cases like this, one can start using techniques customary to the domain of Streaming Analytics (time windows and such). One can bring in different event data sources, and one can even query historical data (or use it as a predictor). Using streaming pipelines, or dumping raw events into Data Lakes and then doing aggregations and inference, are all fine techniques here. If you feed these events into an analytical store, you even get an early start on forming the next peak in the data value mountain range: the Mountain of Wisdom!
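The alarm example above can be sketched with a simple time window. This is only an illustration under my own assumptions: the `Signal` type, the 10-second window, and the `looks_like_break_in` rule are hypothetical, and the hand-written rule stands in for whatever model a real vendor would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    ts: float  # seconds since an arbitrary start
    room: str
    kind: str  # e.g. "motion", "glass_break", "camera"


def windows(signals, window_s=10.0):
    """Group signals into windows that open at the first signal seen
    and close once window_s seconds have passed since that signal."""
    batch = []
    for s in sorted(signals, key=lambda s: s.ts):
        if batch and s.ts - batch[0].ts >= window_s:
            yield batch
            batch = []
        batch.append(s)
    if batch:
        yield batch


def looks_like_break_in(batch, min_rooms=2):
    """Hypothetical rule in place of a model: a glass break plus
    activity reported from at least min_rooms different rooms."""
    kinds = {s.kind for s in batch}
    rooms = {s.room for s in batch}
    return "glass_break" in kinds and len(rooms) >= min_rooms


if __name__ == "__main__":
    signals = [
        Signal(0.0, "kitchen", "glass_break"),
        Signal(3.0, "hall", "motion"),
        Signal(40.0, "bedroom", "motion"),
    ]
    for batch in windows(signals):
        print([s.room for s in batch], looks_like_break_in(batch))
```

Waiting for the window to close costs a few seconds, but the decision is made on all the signals from the house together rather than on each one in isolation, which is what lets the false alarm rate come down.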

Snowflake is moving fast to enable users to implement these Real-time and Near Real-time AI use cases. For Streaming Analytics, Snowflake now offers Snowpipe Streaming in Public Preview, a streaming ingestion solution with a high insert rate (GB/sec per table) and low latency (data is queryable in seconds, <5s being typical). We also launched Dynamic Tables in Public Preview, a very simple way to define streaming pipelines entirely in SQL by specifying one of the most important streaming Service Level Objectives (SLOs), the Lag (here is the Private Preview announcement). And for Machine Learning we offer compute capabilities in Snowpark and are working on much more (come to our Summit or listen to the announcements online).

Happy to see that the industry is also taking this trend seriously. Perhaps you should too.